Threat Landscape is Changing

The Crimean crisis showed Russia’s ‘new generation warfare’ capability or, as NATO described it, ‘hybrid warfare’, which combines propaganda, deception, sabotage and other non-military tactics.

None of these tactics were new. What differed was their intensity, scale and speed, all made possible by available technology, which had become a leading threat vector rather than, as in the past, a supporting element.

According to the Multinational Capability Development Campaign (MCDC) report Countering Hybrid Warfare Project1, ‘… our common understanding of hybrid warfare is underdeveloped and therefore hampers our ability to deter, mitigate and counter this threat’.

This is not surprising, given that most hybrid elements, such as cyberattacks, are launched with the latest technology and are combined with other hybrid elements, such as deception and propaganda.

An effective response therefore requires new technologies and doctrines that enable NATO to respond rapidly and take the initiative before the adversary is able to execute its plan.

Getting Inside the OODA Loop

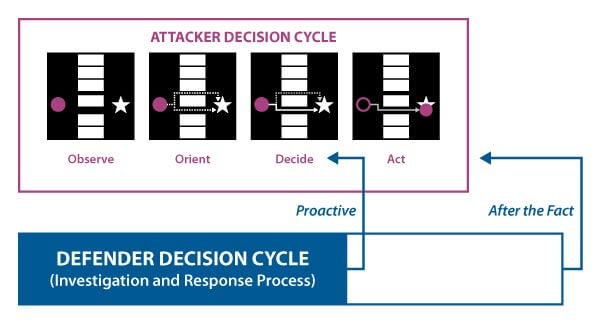

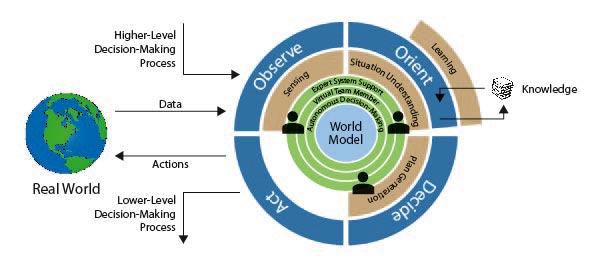

The OODA loop is an acronym for the decision cycle ‘Observe – Orient – Decide – Act’ developed by United States Air Force Colonel John Boyd, who applied the concept to the combat operations process, often at the operational level, during military campaigns.2 The approach explains how agility can overcome raw power when dealing with human opponents.

Following that principle, the response to hybrid threats should be to make better decisions faster in order to outmanoeuvre the adversary. Getting inside the attacker’s OODA loop, that is, completing our own cycle before the attacker completes theirs, rapidly increases our chances of winning because it lets us take the initiative before the attack takes place. The ‘hybrid battlefield’, and cyberattacks in particular, are not limited to military targets, so there are lessons to be learned from how commercial organizations have responded to the hybrid attacks of various adversaries.
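To make ‘getting inside the loop’ concrete, the sketch below models each side’s cycle as four phase durations and asks which side finishes ‘Act’ first. All durations, the start offset and the names `OodaCycle` and `first_to_act` are hypothetical illustrations, not doctrine; the point is only that shortening Observe and Orient can let a defender who starts later still act first.

```python
from dataclasses import dataclass

PHASES = ("observe", "orient", "decide", "act")


@dataclass
class OodaCycle:
    """One side's OODA cycle. Phase durations are hypothetical, in minutes."""
    name: str
    durations: dict  # phase name -> duration in minutes
    start: float = 0.0  # minute at which this side starts observing

    def act_time(self) -> float:
        """Minute at which this side finishes 'Act' and its decision takes effect."""
        return self.start + sum(self.durations[phase] for phase in PHASES)


def first_to_act(a: OodaCycle, b: OodaCycle) -> str:
    """Name of the side that completes its loop first, or 'tie'."""
    if a.act_time() == b.act_time():
        return "tie"
    return a.name if a.act_time() < b.act_time() else b.name


attacker = OodaCycle("attacker", {"observe": 30, "orient": 20, "decide": 10, "act": 5})
# The defender only starts observing 10 minutes after the attacker begins.
manual_defender = OodaCycle("defender", {"observe": 15, "orient": 40, "decide": 20, "act": 10}, start=10)
assisted_defender = OodaCycle("defender", {"observe": 5, "orient": 10, "decide": 10, "act": 5}, start=10)

print(first_to_act(attacker, manual_defender))    # attacker (65 vs 95 minutes)
print(first_to_act(attacker, assisted_defender))  # defender (65 vs 40 minutes)
```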

There are, of course, differences: commercial organizations are not legally allowed to initiate offensive actions, so all their effort is focused on defence. For a commercial organization, getting inside the adversary’s OODA loop means taking the initiative by raising the adversary’s cost of attacking so high that the Return on Investment (ROI) is no longer attractive enough to proceed. This course of action influences the adversary’s decision before the attack (Act) is launched.
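The deterrence-by-cost idea can be written as simple arithmetic. The sketch below assumes a deliberately simplified model in which the attacker’s expected ROI is the probability-weighted payoff minus cost, divided by cost, and the attack proceeds only if that ROI exceeds a hurdle rate. The functions and all figures are hypothetical; real adversary decision-making is far less tidy.

```python
def expected_roi(p_success: float, payoff: float, cost: float) -> float:
    """Attacker's expected ROI: (probability-weighted payoff - cost) / cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (p_success * payoff - cost) / cost


def attack_is_attractive(p_success: float, payoff: float, cost: float,
                         hurdle: float = 0.0) -> bool:
    """In this toy model the attack proceeds only if expected ROI exceeds the hurdle."""
    return expected_roi(p_success, payoff, cost) > hurdle


# Hypothetical figures: same payoff; defence lowers success odds and raises cost.
baseline = expected_roi(p_success=0.6, payoff=100_000, cost=10_000)  # 5.0
hardened = expected_roi(p_success=0.1, payoff=100_000, cost=40_000)  # -0.75
print(attack_is_attractive(0.6, 100_000, 10_000))  # True
print(attack_is_attractive(0.1, 100_000, 40_000))  # False
```

Both defensive levers appear in the formula: lowering `p_success` shrinks the numerator, while raising `cost` both shrinks the numerator and enlarges the denominator.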

Information Dominance

More than 2,000 years ago, Sun Tzu wrote in the ‘Art of War’3 about the importance of information dominance: ‘If you know the enemy and know yourself you need not fear the results of a hundred battles.’ Applied today, this means our analysts need to deliver better assessments faster so that decision-makers can outmanoeuvre the adversary. Hybrid warfare, with its cyberattacks, has overturned the assumption that manpower alone is enough to gain information dominance.

Skyrocketing volumes of data from an ever-growing number of sensors have made this requirement more urgent: all the analysts in the world would not be enough to turn the available information into predictions and help make the best decisions in time.

How Machine Learning can Support Command and Control

Machine learning (ML) is a potentially valuable way to analyse large data sources and signals and predict what is likely to happen, enabling organizations to take the initiative before an attack takes place.

With ML, you can build your own model, in which an algorithm learns how to perform a specific task from data rather than following only explicitly programmed instructions. How well such a model predicts, for instance, an adversary’s attack vector depends on the quality of the algorithm and on the volume and quality of the available data.
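As a minimal illustration of learning from data rather than hand-written rules, the sketch below trains a Bernoulli naive Bayes classifier (a swapped-in, deliberately simple technique) to predict an attack vector from the set of indicators observed. The indicator names, labels and training samples are all hypothetical; with so few samples the prediction is only as good as the data, which is exactly the dependency described above.

```python
import math
from collections import Counter, defaultdict


def train(samples):
    """samples: list of (set_of_indicators, attack_vector_label) pairs."""
    class_counts = Counter(label for _, label in samples)
    feature_counts = defaultdict(Counter)
    vocabulary = set()
    for indicators, label in samples:
        feature_counts[label].update(indicators)
        vocabulary.update(indicators)
    return class_counts, feature_counts, vocabulary


def predict(model, indicators):
    """Most probable label under Bernoulli naive Bayes with Laplace smoothing."""
    class_counts, feature_counts, vocabulary = model
    total = sum(class_counts.values())
    best_label, best_score = None, -math.inf
    for label, n_label in class_counts.items():
        score = math.log(n_label / total)  # class prior
        for feature in vocabulary:
            # Smoothed estimate of P(feature present | label).
            p = (feature_counts[label][feature] + 1) / (n_label + 2)
            score += math.log(p if feature in indicators else 1 - p)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Hypothetical historical incidents: observed indicators -> attack vector.
samples = [
    ({"spoofed_sender", "macro_attachment"}, "phishing"),
    ({"spoofed_sender", "credential_page"}, "phishing"),
    ({"macro_attachment", "credential_page"}, "phishing"),
    ({"port_scan", "exposed_rdp"}, "remote_access"),
    ({"exposed_rdp", "brute_force"}, "remote_access"),
    ({"port_scan", "brute_force"}, "remote_access"),
]
model = train(samples)
print(predict(model, {"spoofed_sender", "macro_attachment"}))  # phishing
print(predict(model, {"port_scan", "brute_force"}))            # remote_access
```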

Computing power, in combination with ML, helps overcome human limitations in working with large data sets because it:

- scales beyond the limits of human capabilities and expertise;

- shines a light in areas undetectable by humans (blind spots);

- helps staff automate routine tasks, avoiding wasted effort.

As with many disruptive innovations, ML presents risks and challenges that could affect the authenticity of the information provided to commanders and the outcomes of the processes and technologies that use it. Basic risks of ML algorithms may include:

- amplification of human bias;

- inadvertent disclosure of private or secret information;

- missing critical context and implications (e.g. confusing an innocent ‘John Smith’ with another ‘John Smith’ who has the same birthdate but a criminal record);

- being fed false or malicious data.
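The last risk, false or malicious training data, is easy to demonstrate. The sketch below assumes a toy single-feature model (outbound data volume per session, a hypothetical feature) whose decision threshold sits halfway between the benign and malicious means. Injecting a few high-volume sessions mislabelled as ‘benign’ shifts the threshold enough that a previously detected exfiltration is waved through.

```python
from statistics import mean


def fit_threshold(benign: list[float], malicious: list[float]) -> float:
    """Toy model: decision threshold halfway between the benign and malicious means."""
    return (mean(benign) + mean(malicious)) / 2


def classify(value: float, threshold: float) -> str:
    return "malicious" if value >= threshold else "benign"


# Hypothetical feature: outbound data volume per session, in MB.
benign = [10, 12, 11, 9, 13]
malicious = [90, 95, 100, 85, 110]
clean_threshold = fit_threshold(benign, malicious)  # 53.5

# An adversary slips high-volume sessions labelled 'benign' into the training feed.
poisoned_threshold = fit_threshold(benign + [150, 160, 170], malicious)  # 81.4375

print(classify(70, clean_threshold))     # malicious
print(classify(70, poisoned_threshold))  # benign: the exfiltration now slips through
```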

These deficiencies could undermine the decisions, predictions or analyses that ML applications produce, exposing us to legal liability and other harm. Some ML scenarios also present ethical dilemmas, for example small deployable drones that, unlike current military drones, could decide to kill based on an algorithm without human approval.

In an ideal world, we would have the best-designed ML algorithms to minimize these risks. Combined with the highest quality and volume of data, high computing power would then enable us to provide the best predictions to support Command and Control (C2) and to execute an effective OODA loop.

In reality, however, prediction depends on how humans adapt to situations and on the rationale behind their decisions. Moreover, the lack of quality data and comprehensive algorithms requires humans to evaluate and understand the more complex situations and possible attacks.

Making Better Decisions, Faster, from a Commercial Cyber Operation

Done in the right way, ML can be of great value within the C2 process. One example is a commercial cyber operation that successfully integrated ML into its C2 process based on three doctrines:

Maximize visibility (minimize blind spots and ensure you have good coverage of sensors)

Internal – Minimize internal blind spots by ensuring you have good coverage (as close to 100% as you can manage) of all asset types (e.g. identities, data centres, email).

External – Ensure you have a diversity of threat feeds from sources that give insight and context about the external environment (e.g. malware, compromised identities, attack websites).
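Measuring internal coverage per asset type is a first step towards finding blind spots. The sketch below assumes an asset inventory keyed by type and a set of asset IDs that report to a sensor; the asset names and the 95% target are hypothetical stand-ins for ‘as close to 100% as you can manage’.

```python
def coverage_report(inventory: dict[str, set[str]],
                    monitored: set[str]) -> dict[str, tuple[float, list[str]]]:
    """Per asset type: fraction of assets with a sensor, plus the unmonitored ones."""
    report = {}
    for asset_type, assets in inventory.items():
        ratio = len(assets & monitored) / len(assets) if assets else 1.0
        report[asset_type] = (ratio, sorted(assets - monitored))  # blind spots
    return report


def below_target(report: dict[str, tuple[float, list[str]]],
                 target: float = 0.95) -> list[str]:
    """Asset types whose coverage falls short of the (hypothetical) target."""
    return sorted(t for t, (ratio, _) in report.items() if ratio < target)


inventory = {
    "identities": {"alice", "bob", "carol", "dave"},
    "data_centres": {"dc-1", "dc-2"},
    "email": {"mx-1"},
}
monitored = {"alice", "bob", "carol", "dc-1", "mx-1"}

report = coverage_report(inventory, monitored)
print(report["identities"])  # (0.75, ['dave'])
print(below_target(report))  # ['data_centres', 'identities']
```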

Reduce manual steps (and errors)

Automate and integrate as many manual processes as possible to remove unneeded human actions that lead to delays and potential human errors.

Maximize human impact

Where human interaction in the process makes sense (e.g. difficult choices, novel decisions), ensure that your analysts have access to extensive expertise and intelligence so they can make better decisions.

Additionally, integrate learning throughout the process, up to and including deciding when to watch an attack unfold to learn its objective (long-term value) rather than block it (short-term value), or to combine the two by directing the adversary to a honeypot, where the attack’s characteristics can be studied without causing harm.

Improving the Impact by Including Synthetic Data and Augmented Reality

Synthetic data is increasingly used to train ML applications, for example in object detection, where a synthetic environment renders 3D models of objects that a system then uses to learn to navigate environments from visual information.

In the C2 environment, this can be a powerful tool for predicting attack vectors, because it helps in understanding terrain challenges and weather conditions.

The following synthetic data types can be included for this purpose:

- image (review picture and video);

- voice (voice and noise detection);

- text (text analysis);

- hybrid (powerful combinations of the above data types to improve accuracy and context).

A Search and Rescue operation, where time is critical to finding survivors and victims, illustrates the advantage well. Its challenges include:

- Difficulty finding survivors in rescue situations where low light, weather, or complex terrain are factors (e.g. forests and oceans).

- There is too much information for the human eye to process in a short time frame.

- Resource bottlenecks requiring creative solutions to maximize effectiveness.

With the support of ML and synthetic data, it is possible to conduct a highly targeted search that gives the best chance of finding survivors and victims:

- Training machines on data allows them to learn models for identifying objects.

- These algorithms can be used to scan photos, videos, and audio data to look for survivors/victims.

- Synthetic data is especially useful when compliance and privacy issues restrict storing, accessing, and processing ‘real’ data.
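The sketch below illustrates the idea on a very small scale: it generates labelled synthetic ‘thermal’ frames (cold background noise, optionally with one warm 2x2 blob standing in for a person), calibrates a detection threshold on them, and checks it on fresh synthetic frames. The frame size, temperatures and the hottest-window detector are hypothetical simplifications, far from a real SAR pipeline, but no real imagery of real people is stored or processed at any point.

```python
import random

SIZE = 16  # each frame is SIZE x SIZE "thermal" pixels


def synthetic_frame(rng: random.Random, with_person: bool) -> list[list[float]]:
    """Cold background noise (deg C); optionally add one warm 2x2 blob as a 'person'."""
    frame = [[rng.gauss(10.0, 2.0) for _ in range(SIZE)] for _ in range(SIZE)]
    if with_person:
        r, c = rng.randrange(SIZE - 1), rng.randrange(SIZE - 1)
        for dr in (0, 1):
            for dc in (0, 1):
                frame[r + dr][c + dc] += rng.uniform(15.0, 25.0)
    return frame


def hottest_window(frame: list[list[float]]) -> float:
    """Detector feature: the highest mean temperature over any 2x2 window."""
    return max(
        (frame[r][c] + frame[r][c + 1] + frame[r + 1][c] + frame[r + 1][c + 1]) / 4
        for r in range(SIZE - 1)
        for c in range(SIZE - 1)
    )


def make_dataset(rng: random.Random, n: int) -> list[tuple[float, bool]]:
    """n labelled synthetic examples, alternating 'person' / 'no person'."""
    return [(hottest_window(synthetic_frame(rng, i % 2 == 0)), i % 2 == 0) for i in range(n)]


def calibrate_threshold(data: list[tuple[float, bool]]) -> float:
    """Choose the observed feature value that best separates the labels."""
    def accuracy(t: float) -> float:
        return sum((x >= t) == y for x, y in data) / len(data)
    return max((x for x, _ in data), key=accuracy)


rng = random.Random(42)
threshold = calibrate_threshold(make_dataset(rng, 200))
test_set = make_dataset(rng, 200)
accuracy = sum((x >= threshold) == y for x, y in test_set) / len(test_set)
print(f"threshold={threshold:.1f}, held-out accuracy={accuracy:.2f}")
```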

Synthetic data also supports scenarios that present collateral damage assessments or other impacts of events. A clear example: simply displaying that a server farm is compromised and out of operation gives the commander only limited information.

The commander wants the answer to the question ‘so what?’: for instance, that the downtime of the compromised server farm immediately delays the delivery of emails to the operation by at least one hour or, even worse, leaves an incomplete situational awareness picture.

The impact of such scenarios can be made more prominent by introducing Augmented Reality (AR) devices into the command post. Presenting all information, including possible impacts, on AR devices can speed up the commander’s understanding of the situation and enable faster decision-making. AR seamlessly blends holograms with the real world; in the scenario above, operators on the ground could project overlays that display important associated information, helping them gain a clearer understanding of the situation at hand.

Reduce Time and Complexity in Decision-Making with Machine Learning and Augmented Reality

ML and AR will give analysts and commanders access to additional Artificial Intelligence (AI) services unlocked by these new technologies. This will have a positive impact on the following parts of the C2 process:

- Course of Action options (more precise and much faster);

- analysis of patterns and anomalies in data to inform action;

- improved force readiness by aggregating siloed and open source data for intelligence analysis;

- automatic classification and processing of visual data such as reconnaissance images or training video;

- automated translation and transcription for better interaction in multinational forces and expeditionary missions.

The question that arises is whether AI can take over the C2 process in the future.

In the near future, we will see ML driving a process that should always be managed by humans. In practice, this means that ML will make the ‘no-brainer’, highly logical decisions and execute them quickly, always under the authority and responsibility of humans. More complex decisions will always require human agreement before execution, with ML advising on what to do.
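This division of labour can be expressed as a simple approval gate. The sketch below assumes each ML recommendation carries a confidence score and a flag saying whether a standing, human-approved policy already covers it; the `Recommendation` type, the `decide` function and the 0.95 cut-off are hypothetical. Routine, high-confidence actions execute automatically, and everything else is treated as advice that a human must accept or reject.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Recommendation:
    action: str
    confidence: float  # model confidence in [0, 1]
    routine: bool      # True if a standing, human-approved policy covers this case


def decide(rec: Recommendation,
           ask_human: Callable[[Recommendation], bool],
           min_confidence: float = 0.95) -> tuple[str, str]:
    """Return (outcome, decided_by). Only routine, high-confidence actions skip the human."""
    if rec.routine and rec.confidence >= min_confidence:
        return ("executed", "auto")  # still executed under a human-approved policy
    # Complex or uncertain: the ML output is advice; a human must agree first.
    return ("executed", "human") if ask_human(rec) else ("rejected", "human")


print(decide(Recommendation("block known-malicious file hash", 0.99, True), lambda r: False))
# ('executed', 'auto')
print(decide(Recommendation("isolate a data centre", 0.99, False), lambda r: False))
# ('rejected', 'human')
```

In practice `ask_human` would be a call into an analyst’s workflow; here it is a plain function so the gate can be exercised in isolation.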

The quality of ML decisions continues to depend upon the availability, volume and quality of data and on the quality of the algorithms.

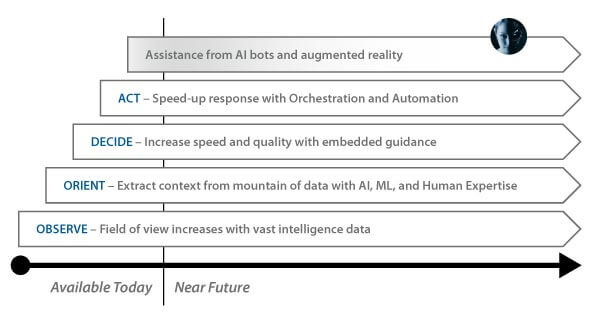

Evolution Trajectory of (Cyber) Command and Control

The main advantage we see in the evolution of C2 is a decrease in the ‘Mean Time To Remediation (MTTR)’, achieved by enabling expert humans to make optimized decisions faster.
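MTTR is simply the average time from detection to remediation across incidents. The sketch below assumes MTTR is measured from detection (some organizations measure from the start of the incident instead), and the incident timestamps are hypothetical.

```python
from datetime import datetime, timedelta


def mean_time_to_remediation(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Mean of (remediated_at - detected_at) over all incidents."""
    if not incidents:
        raise ValueError("no incidents")
    total = sum((fixed - detected for detected, fixed in incidents), timedelta())
    return total / len(incidents)


# Hypothetical incidents: (detected_at, remediated_at).
incidents = [
    (datetime(2024, 1, 1, 8, 0), datetime(2024, 1, 1, 10, 0)),    # 2 h
    (datetime(2024, 1, 2, 9, 0), datetime(2024, 1, 2, 13, 0)),    # 4 h
    (datetime(2024, 1, 3, 14, 0), datetime(2024, 1, 3, 14, 30)),  # 30 min
]
print(mean_time_to_remediation(incidents))  # 2:10:00
```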

The figure shows Microsoft’s expectation for this evolution: C2 will continue to evolve and is expected to be brought to a new level by the introduction of AI bots and AR, further decreasing the MTTR.

Technology will continue to improve, as will the ability and speed with which analysts and incident responders detect and remediate incidents. The pace of this evolution will depend on how far humans accept and trust the output of prediction algorithms, that is, whether they feel comfortable making important decisions based on it.

The idea that AI will, in a relatively short time, become superior to human intelligence was popularized by the well-known futurist Ray Kurzweil, who in his 2005 book ‘The Singularity Is Near: When Humans Transcend Biology’4 set 2045 as the date for this development. ‘It may be true that new technologies are slowly replacing certain cognitive tasks, just like machines replaced physical labour during the Industrial Revolution’.

Only the future will tell whether the outcome of battles will depend on having the best AI technology. I would therefore like to conclude my article with a quote from Microsoft’s Chief Executive Officer (CEO), Satya Nadella, on how AI should develop:5

‘We’ve seen how AI can be applied for good, but we must also guard against its unintended consequences. Now is the time to examine how we build AI responsibly and avoid a race to the bottom. This requires both the private and public sectors to take action.’